Biography

I am a Postdoctoral Researcher at the Department of Computer Science at Boston University and at the Chair for Statistics and Data Science in Social Sciences and the Humanities at the Ludwig Maximilian University of Munich.

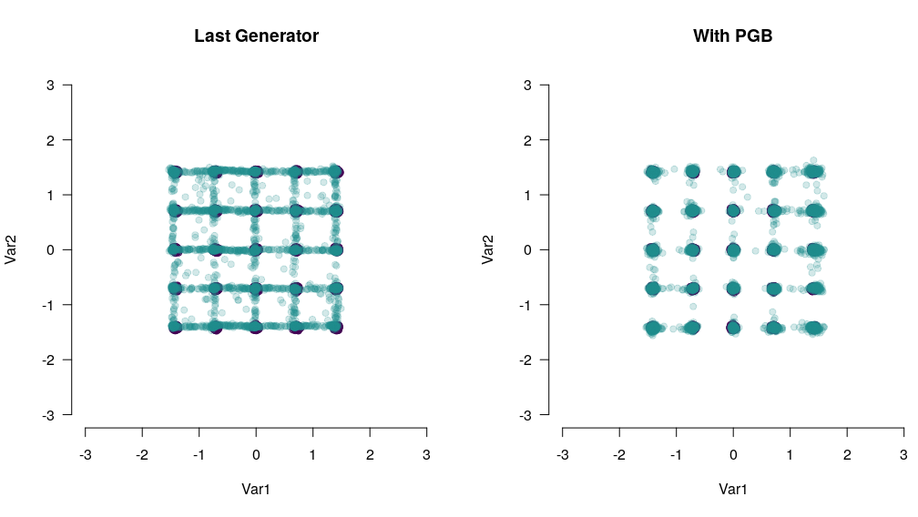

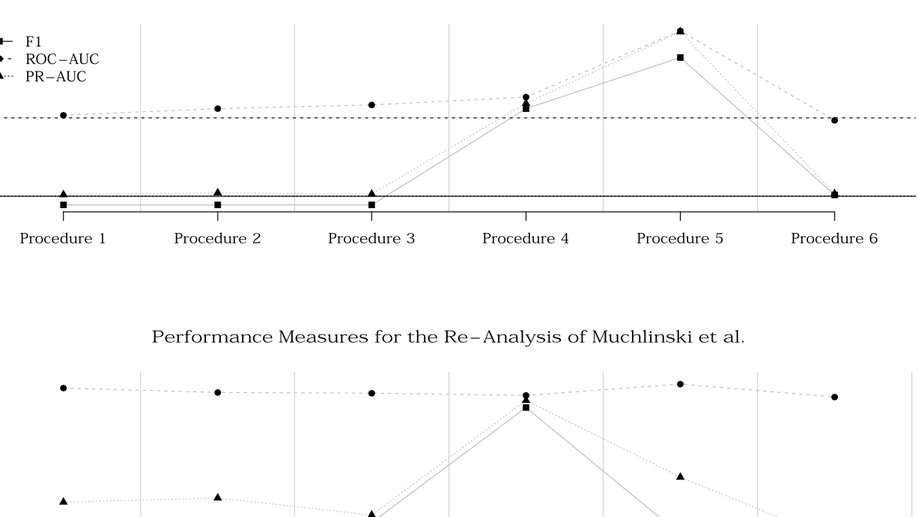

My research focuses on quantitative methodology, specifically the application of deep learning algorithms to social science problems (e.g., multiple imputation of missing data and synthetic data for data sharing). Substantively, I am interested in the prediction of political behavior and in the ethical implications of new trends in applied social science research, such as Big Data and Artificial Intelligence, with a focus on privacy. I have published multiple papers in internationally renowned journals and, through invited talks, have presented my research to international audiences.

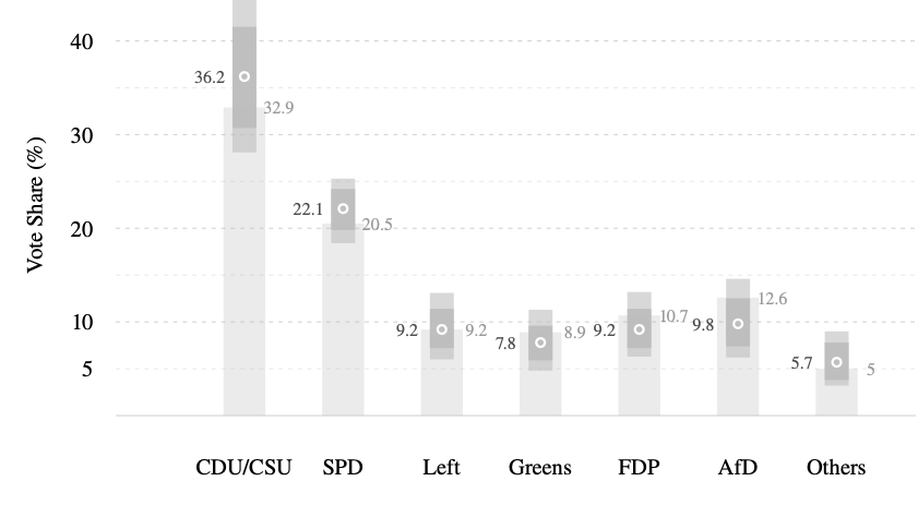

Furthermore, I am a co-founder of, contributor to, and the visualizationist of zweitstimme.org – a website that communicates a scientific forecast for German federal elections to a broad audience.

Interests

- Machine Learning

- Deep Learning

- (Differential) Privacy

- Big Data

- Data Visualization

- Voting Behavior

- (Field-) Experimental Research

Education

- Ph.D., 2023, Graduate School of Economic and Social Sciences, University of Mannheim

- M.A. in Political Science, 2016, University of Mannheim

- B.A. in Governance and Public Policy, 2013, University of Passau